MATH 625: Mathematical Principles of Artificial Intelligence

Fall 2026

This course is designed to help students move beyond treating machine learning as a “black box.” Instead, we will build a rigorous mathematical foundation for modern AI systems, starting from first principles and culminating in the study of Geometric Deep Learning.

Course Philosophy and Structure

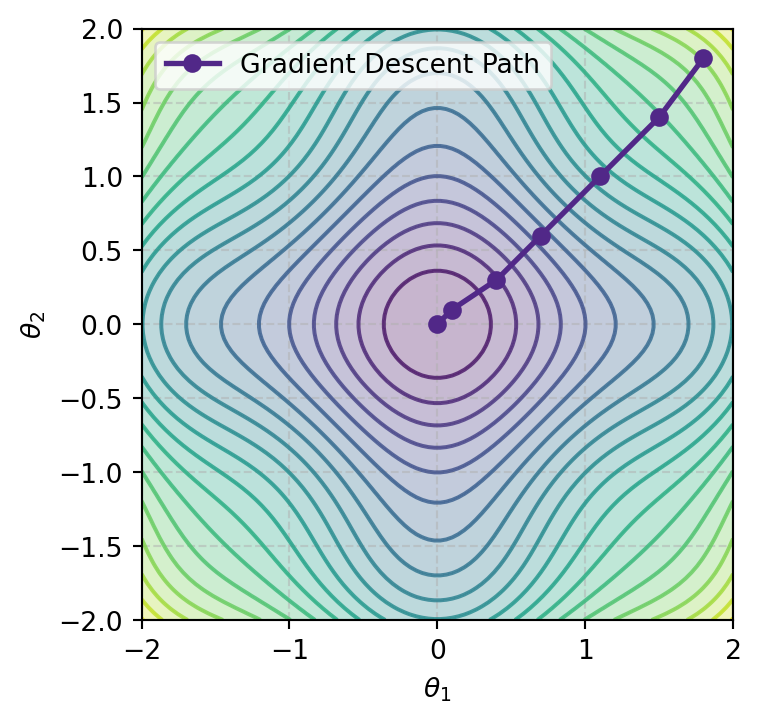

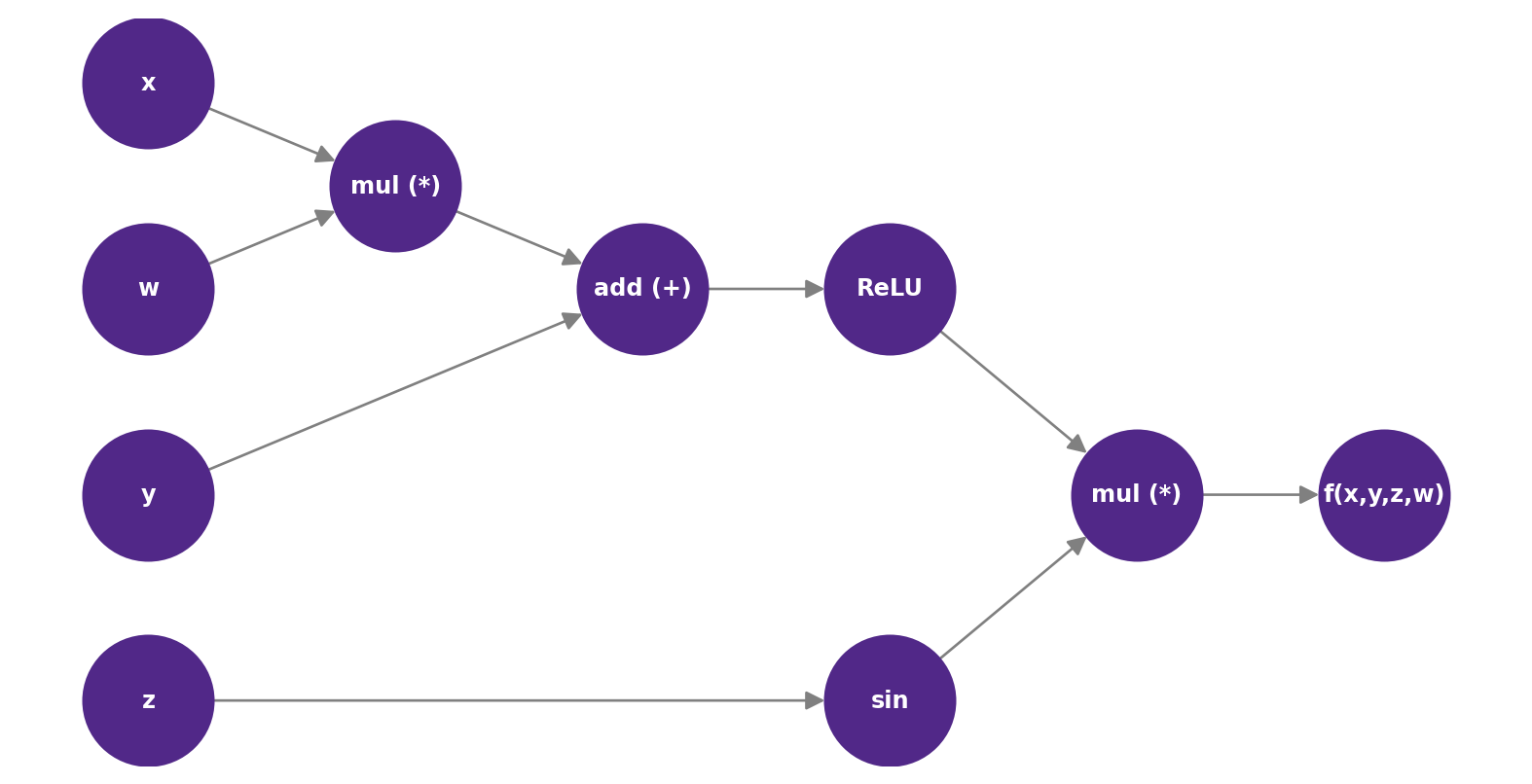

The curriculum blends mathematical theory with hands-on implementation. We begin with the fundamental language of data: linear algebra and optimization. Before working with standard neural network libraries, we will implement core algorithms such as gradient descent and automatic differentiation from scratch, so that students understand not only how to use these tools but also why they work. We then move on to more advanced topics, including invariance, equivariance, and graph neural networks, which are essential for designing models that learn from complex data structures.
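To give a flavor of what "from scratch" can mean, here is a minimal sketch, not taken from the course materials. It assumes a toy one-parameter loss, (w - 3)^2, and uses forward-mode automatic differentiation with dual numbers, a simpler relative of the reverse-mode method used to train neural networks. The names `Dual`, `derivative`, `gradient_descent`, and `loss` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Dual:
    """A number that carries its value and its derivative together."""
    val: float
    grad: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.val + other.val, self.grad + other.grad)

    __radd__ = __add__

    def __sub__(self, other):
        other = _lift(other)
        return Dual(self.val - other.val, self.grad - other.grad)

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.val * other.val,
                    self.grad * other.val + self.val * other.grad)

    __rmul__ = __mul__


def _lift(x):
    """Treat a plain number as a constant (derivative zero)."""
    return x if isinstance(x, Dual) else Dual(float(x), 0.0)


def derivative(f, x):
    """Evaluate f'(x) by seeding the input with derivative 1."""
    return f(Dual(x, 1.0)).grad


def gradient_descent(f, x0, lr=0.1, steps=100):
    """Repeatedly step against the derivative to reduce f."""
    x = x0
    for _ in range(steps):
        x -= lr * derivative(f, x)
    return x


def loss(w):
    """Toy loss (w - 3)^2, assumed here purely for illustration."""
    d = w - 3
    return d * d


w_star = gradient_descent(loss, x0=0.0)
print(w_star)  # approaches 3, the minimizer of the toy loss
```

The point of the sketch is that no derivative is written by hand: the arithmetic operators propagate it, and gradient descent only consumes the result.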

Key Learning Milestones

Optimization: Developing a mechanical intuition for how models “learn” by tuning parameters through gradient descent.

Automatic Differentiation: Implementing the core technology that enables modern neural network training.

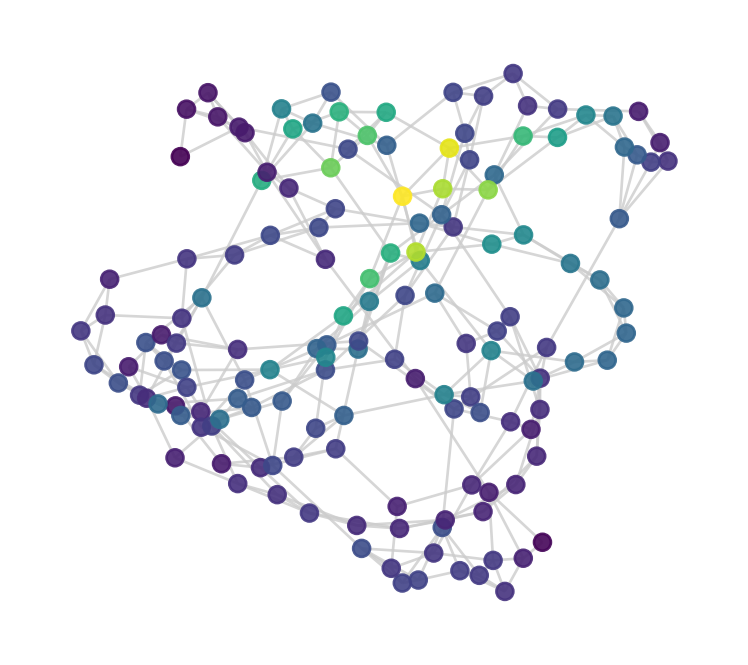

Geometric Deep Learning: Exploring invariance and equivariance to design models that respect the underlying symmetry of data.

Graph Neural Networks (GNNs): Constructing and training a GNN to solve relational data problems.
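As an illustration of the last two milestones, the sketch below checks permutation equivariance for a single message-passing step on a three-node graph. It is an assumed toy setup rather than the course's own project code: node features are scalars, neighbors are aggregated by summation, and the function names, weights, and graph are hypothetical.

```python
def message_passing_layer(adj, feats, w_self, w_neigh):
    """One update: h_i' = w_self * h_i + w_neigh * (sum of neighbor features)."""
    n = len(feats)
    out = []
    for i in range(n):
        neigh_sum = sum(feats[j] for j in range(n) if adj[i][j])
        out.append(w_self * feats[i] + w_neigh * neigh_sum)
    return out


def permute_graph(adj, feats, perm):
    """Relabel nodes so that new node k is old node perm[k]."""
    n = len(feats)
    new_adj = [[adj[perm[a]][perm[b]] for b in range(n)] for a in range(n)]
    new_feats = [feats[perm[a]] for a in range(n)]
    return new_adj, new_feats


# Path graph 0 - 1 - 2 with one scalar feature per node.
adj = [[0, 1, 0],
       [1, 0, 1],
       [0, 1, 0]]
feats = [1.0, 2.0, 4.0]
perm = [2, 0, 1]

out = message_passing_layer(adj, feats, w_self=0.5, w_neigh=0.25)
p_adj, p_feats = permute_graph(adj, feats, perm)
out_perm = message_passing_layer(p_adj, p_feats, w_self=0.5, w_neigh=0.25)

# Equivariance: relabeling the input relabels the output in the same way.
equivariant = out_perm == [out[perm[a]] for a in range(len(feats))]

# Invariance: a sum readout over nodes ignores the labeling entirely.
invariant = sum(out_perm) == sum(out)

print(equivariant, invariant)
```

Summation is used as the aggregator because it does not depend on the order in which neighbors are visited, which is what makes the layer respect the symmetry of node relabeling.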

Technical Details

Prerequisites: MATH 221 or MATH 251, and introductory programming (CIS 209 or equivalent).

Format: Asynchronous online course.

Assessment: The course is project-heavy, featuring three major coding projects that build toward a final Graph Neural Network implementation.